Often when working with an embedded device, it’s easy to think of the device or firmware as a single, homogeneous, black-box system. Inputs from some array of peripherals come in, are processed by some code, and some sort of outputs are displayed or actions performed.

How the device achieves its goals isn’t an issue as long as it gets the job done and performs well.

Designing a system with this monolithic mindset, however, not only takes more time and money to build, but actually can make for a less robust system. It’s a far better design to break up the system into modular components that can be developed and tested independently.

Modular software design works as well in practice as it does in theory. On two projects I’ve worked on, we used modular design to build and test software solutions.

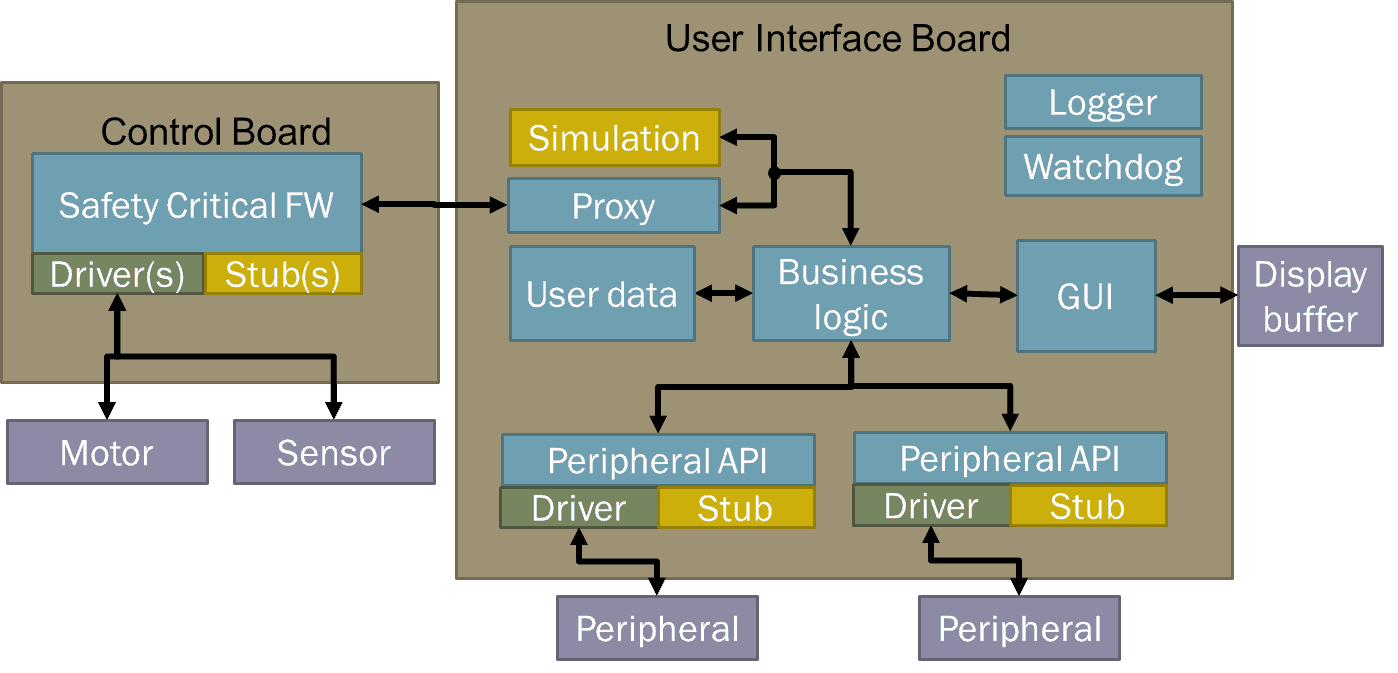

Each project’s device had two primary boards: the control board, which handled all safety-critical operations, including monitoring and controlling the core peripherals, and the user interface board, which handled some business logic, user configurations, and GUI interactions. The control board ran either bare metal or a lightweight embedded OS; the user interface board ran an embedded Linux variant.

Separating the functionality this way allowed developers to work in parallel, and made development cheaper and quicker by eliminating the need to test the user interface to the same rigorous safety standards as the control board. Meanwhile, the user interface leveraged higher-level Linux and C++ capabilities that would have been more difficult to certify, all while maintaining the same base reliability of the overall system.

On the Linux side, we isolated all major peripherals into their own interfaces or Linux daemons. This not only allowed us to develop and test the peripherals independently but also gave us the ability to stub them out.

For each of the major device peripherals, including the bus interface that connected the control and user boards, we created simple stub applications where we could simulate device inputs or outputs. As a result, we could run and test nearly the whole system cross-compiled without any actual hardware connected. No more need to repeatedly flash the latest development build to the board each time we wanted to try things: we could instead run and debug the code in a simulator from the comfort of a virtual machine on our laptops, even before the final revisions of the hardware were done. And all the while we could easily monitor or inject messages between applications in real time to aid in development and debugging. We could even mix and match which components ran on the target and which ran in the simulated environment.

This certainly was a huge time savings for development!

With each application isolated to its own environment, each subsystem could be tested independently against the interface control documents we defined. While testing the application, we could easily use tools to monitor, record, or play back inter-process communication in order to understand how an application was working or reproduce a bug scenario.

For one project we created an application that linked to our custom messaging library that could create and inject a list of messages via a GUI for testing in order to exercise a subsystem or simulate an event on a stubbed out peripheral. For another, we used JSON over MQTT for our communication protocol and could monitor all system traffic or inject new messages from one central broker.
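To give a feel for the monitor-and-inject idea, here is a minimal sketch that uses a tiny in-memory stand-in for a broker. It is not the messaging library or the MQTT setup from either project: the `Broker` class, the topic names, and the payload fields are all hypothetical, and a real system would sit on an actual broker and network transport.

```python
import json
from collections import defaultdict


class Broker:
    """In-memory stand-in for a central message broker (hypothetical)."""

    def __init__(self):
        self._subscribers = defaultdict(list)  # topic -> list of callbacks
        self._monitors = []                    # callbacks that see every message

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def monitor(self, callback):
        self._monitors.append(callback)

    def publish(self, topic, payload):
        message = json.dumps(payload)  # messages travel as JSON text
        for callback in self._monitors:
            callback(topic, message)
        for callback in self._subscribers[topic]:
            callback(json.loads(message))


broker = Broker()
traffic = []  # everything the monitor saw, in order
alarms = []   # what the subsystem under test produced

broker.monitor(lambda topic, message: traffic.append((topic, message)))


def on_temperature(reading):
    # Subsystem under test: raise an alarm when a reading is too hot.
    if reading["celsius"] > 80:
        broker.publish("ui/alarm", {"kind": "overheat"})


broker.subscribe("sensor/temperature", on_temperature)
broker.subscribe("ui/alarm", alarms.append)

# Inject a simulated reading, as if a stubbed sensor had sent it.
broker.publish("sensor/temperature", {"celsius": 95})
```

Because every message passes through one place, the same hook that records `traffic` could just as easily write to a log file or feed a test assertion.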

This modular design made testing significantly smoother than it could have been otherwise.

In the software world, we often talk about having “clean code,” of which one aspect is that each function or file serves a single purpose rather than trying to be a Swiss-army knife that does anything and everything. This applies not only to code within an application but also to a system as a whole.

Instead of having one executable run the whole show, it’s better to design several smaller applications that handle certain subfunctions. This modular approach can apply to hardware, too, where one board is responsible for processing certain data and events and another handles other functions.

The first step in building a modular design is to isolate safety-critical functions from the rest of the system. This doesn’t mean that the rest of the system can be lax in security, reliability or performance, but when trying to meet stringent certification standards for devices in medical or aeronautical fields, the process becomes much easier and cheaper if it’s modular.

It’s significantly easier to prove, for example, that a device’s overheat protection logic is robust when you don’t have to worry that an unrelated error in parsing a user’s configuration file could bring down the system.

The second area to look into modularizing systems is with regard to peripherals. If you’re interfacing with some other piece of hardware, it’s better to have a clean interface to the device that other parts of the application can use through an abstraction layer rather than interacting with the device directly. This ensures all error handling of device inputs can be validated in a central spot and other areas of the system can use the peripheral in a consistent manner.

An added benefit of isolating peripheral code is the ability to stub out hardware interactions, allowing developers and testers to work with the rest of the system in an emulated environment.
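A minimal sketch of that abstraction layer might look like the following. The temperature sensor, its valid range, and the `fan_should_run` helper are all assumptions made up for illustration; the point is only that validation sits in the interface and the stub slots in where the hardware driver would be.

```python
from abc import ABC, abstractmethod


class TemperatureSensor(ABC):
    """Interface the rest of the system uses (hypothetical peripheral)."""

    @abstractmethod
    def read_raw(self):
        """Return the raw reading in tenths of a degree Celsius."""

    def read_celsius(self):
        # Validation lives in one place, whichever implementation is behind it.
        raw = self.read_raw()
        if not -400 <= raw <= 1500:
            raise ValueError(f"reading out of range: {raw}")
        return raw / 10.0


class StubTemperatureSensor(TemperatureSensor):
    """Stub that replays scripted readings instead of touching hardware."""

    def __init__(self, readings):
        self._readings = iter(readings)

    def read_raw(self):
        return next(self._readings)


def fan_should_run(sensor, threshold_celsius=60.0):
    # Application code depends only on the interface, never on the device.
    return sensor.read_celsius() >= threshold_celsius


stub = StubTemperatureSensor([255, 720, 9999])
cool = fan_should_run(stub)  # 25.5 degrees: below the threshold
hot = fan_should_run(stub)   # 72.0 degrees: above the threshold
try:
    fan_should_run(stub)     # 9999 is rejected by the shared validation
    rejected = False
except ValueError:
    rejected = True
```

A hardware-backed class would implement `read_raw` against the real bus, and nothing in `fan_should_run` would need to change.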

In addition to peripherals, business logic can be broken down into separate micro-applications, too. Rather than relying on a single event loop processing a subsystem, a system can delegate certain tasks to other applications, such as loading or saving user data, playing audio cues or managing log files. Going for a full model-view-controller approach, a system can even separate core business logic and data from the GUI upon which it’s displayed.

An additional process can monitor all the other apps, too, and reboot them like a watchdog should any subsystem malfunction, so only one piece of the whole system crashes rather than the whole thing going down under some unexpected catastrophic failure.
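The supervising idea can be sketched in a few lines. This is a simplified illustration rather than what either project ran: the `supervise` function, the restart limit, and the two throwaway child commands are hypothetical, and it watches one short-lived process at a time where a real watchdog would track several long-running ones.

```python
import subprocess
import sys


def supervise(command, max_restarts=3):
    """Run command, restarting it after each abnormal exit.

    Returns the number of restarts performed.
    """
    restarts = 0
    while True:
        exit_code = subprocess.run(command).returncode
        if exit_code == 0:
            return restarts  # clean shutdown: nothing left to do
        if restarts == max_restarts:
            return restarts  # give up rather than restart forever
        restarts += 1


# Hypothetical subsystem that always crashes with exit code 1.
crashing = [sys.executable, "-c", "import sys; sys.exit(1)"]
# Hypothetical subsystem that exits cleanly.
healthy = [sys.executable, "-c", "pass"]

crash_restarts = supervise(crashing, max_restarts=2)
healthy_restarts = supervise(healthy)
```

The restart cap matters: without it, a subsystem that fails on startup would be relaunched in a tight loop and could starve the rest of the system.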

When designers break up a system into smaller independent chunks, the complexity of testing greatly diminishes. Suppose one module has three operating modes: A, B, and C; and another module has four states it can be in: 1, 2, 3, and 4. If these were coupled and dependent on each other, we’d need at minimum 12 test cases. But if we can isolate them and make them independent, then the minimum number of tests is only 7, the sum of the states instead of the product.
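The arithmetic is easy to check by enumerating the cases. The mode and state labels below are the ones from the example above; the variable names are just for illustration.

```python
from itertools import product

modes = ["A", "B", "C"]
states = [1, 2, 3, 4]

# Coupled modules: every mode must be tested in combination with every state.
coupled_cases = list(product(modes, states))

# Independent modules: each mode and each state is tested once on its own.
independent_cases = modes + states

print(len(coupled_cases), len(independent_cases))  # 12 7
```

The gap widens quickly as modules are added: a third coupled module with five states would multiply the first count to 60 but only raise the second to 12.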

It’s true that some small embedded devices might suffer too much overhead from a modular design, but most embedded systems today have sufficient computing resources to sacrifice a marginal overhead in exchange for dramatically decreased development costs, improved robustness and reliability, and more maintainable code. It’s the same reason developers rarely code directly in assembly language anymore: the minor potential performance gains are offset by a nightmare of unmaintainable, non-robust systems.

In software development, we try to avoid “spaghetti code,” so why would we design “spaghetti systems?”

In my next post, I’ll dive into the details of how to handle the communication between these different subsystem silos. But for now, remember that isolated, modular subsystems are not only cheaper to develop and test, but they can be more reliable too. Or, to repurpose the lyrics of a certain famous song: “I am a rock. I am an island.”

The follow-up to this post is “Communication Protocol Considerations Key to Modular Software Development.”